Because in the case of Docker, we define the port in daemon.json, set the same port as the target in prometheus.yml, and get the data related to memory and CPU utilization. So I kindly request your suggestions on this.

The second role, tasks, represents any individual container deployed in. It can be used to automatically monitor the Docker daemons or the Node Exporters that run on the Swarm hosts. The first role, nodes, represents the hosts that are part of the Swarm. Node Exporter metrics visualization in Prometheus & Grafana.

May I know if I am on the right path for collecting the CPU and memory utilization data of the LXC container? If not, please suggest the right way to collect the CPU and memory data of an LXC container in Prometheus.

The Docker Swarm service discovery contains 3 different roles: nodes, services, and tasks. How to configure Node Exporter within Prometheus.

The second is running inside the container to scrape the process-related data. One is running outside the container, on my host, to scrape the LXC CPU and memory data. Now, coming to process monitoring: if I run node_exporter inside the container, then there are multiple instances of node_exporter for Prometheus to scrape, i.e.

For more information on collectors, refer to the collectors-list section. The node_exporter itself is comprised of various collectors, which can be enabled and disabled at will.

My prometheus.yml file looks like this: `- job_name: node`

The component embeds node_exporter, which exposes a wide variety of hardware and OS metrics for *nix-based systems.

I am manually running the node exporter binary on my host ("./node_exporter"), and then I mapped the LXC target to port 9100, where node_exporter is collecting the data. Later, after reading a few articles, I used node_exporter.
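To make the memory side of the question concrete, here is a minimal sketch of how a memory utilization figure can be derived from the text that node_exporter serves on port 9100. It assumes the standard `node_memory_MemTotal_bytes` and `node_memory_MemAvailable_bytes` gauges are present; the helper names `parse_metrics` and `memory_utilization` and the sample values are hypothetical, made up for illustration.

```python
# Hypothetical sample of node_exporter output (values are made up).
SAMPLE = """\
# HELP node_memory_MemTotal_bytes Memory information field MemTotal_bytes.
# TYPE node_memory_MemTotal_bytes gauge
node_memory_MemTotal_bytes 8e+09
# TYPE node_memory_MemAvailable_bytes gauge
node_memory_MemAvailable_bytes 2e+09
"""


def parse_metrics(text):
    """Collect un-labelled samples from Prometheus text exposition format."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blanks, HELP/TYPE comments, and labelled series.
        if not line or line.startswith("#") or "{" in line:
            continue
        parts = line.split()
        if len(parts) < 2:
            continue
        try:
            samples[parts[0]] = float(parts[1])
        except ValueError:
            continue
    return samples


def memory_utilization(samples):
    """Return the used-memory fraction as 1 - available / total."""
    total = samples["node_memory_MemTotal_bytes"]
    available = samples["node_memory_MemAvailable_bytes"]
    return 1.0 - available / total


if __name__ == "__main__":
    print(memory_utilization(parse_metrics(SAMPLE)))
```

Whether these figures describe the host or the LXC container depends on where node_exporter runs and what that environment exposes under /proc, so treat the result as belonging to whichever instance was scraped.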
The default username and password are admin:admin. After logging in, choose Dashboard > Manage > Import, enter the dashboard ID 1860 (node-exporter) and 193 (cadvisor) under "import via", click Load, and then fill in the name, folder, and Prometheus data source as follows.

This article shows you how to build a Prometheus container image and set up the Prometheus Node Exporter to collect data from home computers.

I have my LXC container running on Ubuntu 20.04. I tried to get the LXC info using Prometheus by defining the LXC job in the prometheus.yml file.

Executing GPU Metrics Script: NVIDIA provides a Python module for monitoring NVIDIA GPUs using the newly released Python bindings for NVML (NVIDIA Management Library).

Because Prometheus is not scraping the LXC CPU and memory utilization info without node_exporter running on my host.

This script will bring up 3 containers in sequence: Pushgateway, Prometheus & Grafana. We strongly recommend configuring a separate user for Grafana.

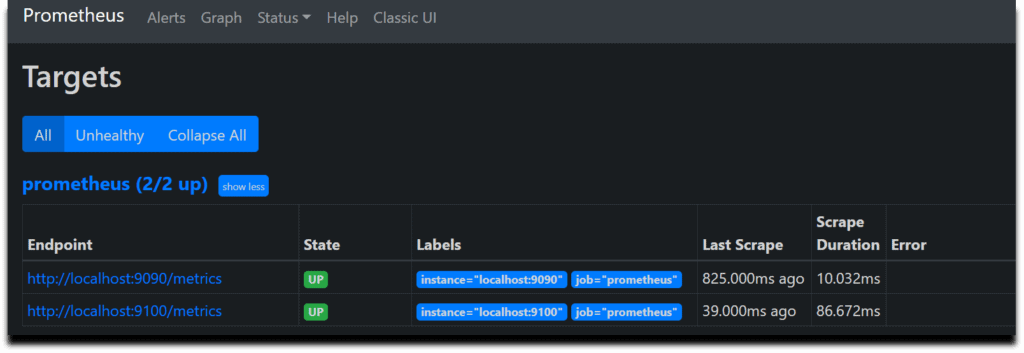

That’s because this exporter does not collect metrics from multiple nodes. Note: For this integration to work properly, you must connect each node of your MongoDB cluster to an agent instance. The component embeds Percona’s mongodb_exporter.

Sorry for the long post. But the reason I am asking is that I am currently running node_exporter on my host to get the LXC-related CPU and memory utilization info.

As you can see from the above output, the node-exporter service has three endpoints.

Alerting: elastalert as a drop-in for Elastic.io’s Watcher for alerts triggered by certain container or host log events, and Prometheus’ Alertmanager for alerts regarding metrics.

Step 6: Now, check the service’s endpoints and see if it is pointing to all the daemonset pods.
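For the CPU side of the question, the usual approach with node_exporter is to look at how fast the idle counter `node_cpu_seconds_total{mode="idle"}` grows between two scrapes. The sketch below shows that arithmetic only; the function name `cpu_utilization` and the numbers are hypothetical, and it assumes the idle values have already been summed across all CPUs.

```python
def cpu_utilization(idle_start, idle_end, elapsed_seconds, num_cpus):
    """Estimate the busy fraction from two samples of the summed idle counter.

    idle_start / idle_end: sum of node_cpu_seconds_total{mode="idle"} over
    all CPUs at the start and end of the window (assumed inputs).
    """
    if elapsed_seconds <= 0 or num_cpus <= 0:
        raise ValueError("elapsed_seconds and num_cpus must be positive")
    idle_delta = idle_end - idle_start
    # Each CPU can contribute at most elapsed_seconds of idle time.
    idle_fraction = idle_delta / (elapsed_seconds * num_cpus)
    # Clamp to [0, 1] to absorb scrape jitter.
    return min(1.0, max(0.0, 1.0 - idle_fraction))


if __name__ == "__main__":
    # 4 CPUs, 60 s window, 180 CPU-seconds spent idle in total.
    print(cpu_utilization(1000.0, 1180.0, 60.0, 4))
```

In Prometheus itself the same idea is commonly written as a query along the lines of `1 - avg(rate(node_cpu_seconds_total{mode="idle"}[5m]))`, so the Python version is only meant to show what that expression computes.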

Logging: Filebeat for log collection and forwarding, Logstash for aggregation and processing, Elasticsearch as datastore/backend, and Kibana as the frontend.

Monitoring: cAdvisor and node_exporter for collection, Prometheus for storage, Grafana for visualisation.

The Try in PWD below allows you to quickly deploy the entire Prometheus stack with a click of the button. Here's a quick start using Play-With-Docker (PWD) to start up a Prometheus stack containing Prometheus, Grafana and Node scraper to monitor your Docker infrastructure.

This is an out-of-the-box monitoring, logging and alerting suite for Docker hosts and their containers, complete with dashboards to monitor and explore your host and container logs and metrics.

I have successfully set up the data sources in Grafana and see the default dashboard with the following docker-compose.yml. A Prometheus & Grafana docker-compose stack.

The original (from the GitHub repository above) also draws annotations into the graphs by pulling user-defined log events and Prometheus alerts from Elasticsearch (second screenshot). Includes templating for container groups. The screenshot should be pretty self-explanatory. The (simplified) dashboard used in this Monitoring/Logging/Alerting Suite:
